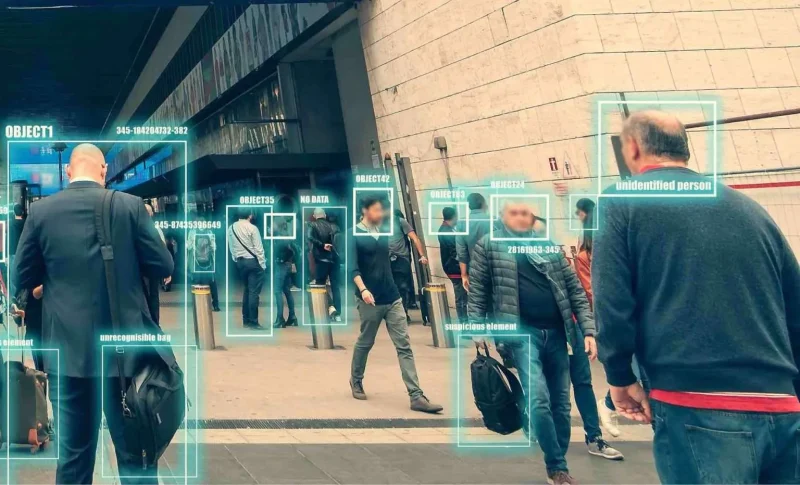

We've been taught to trust numbers and data. When an algorithm makes a decision, whether it's approving your loan, predicting crime risk, or deciding what news you see, it feels objective. Scientific. Unbiased.

But here's the problem: algorithms aren't neutral. They're designed by people, trained on imperfect data, and optimized for specific goals that may have nothing to do with fairness or accuracy. The technical complexity just makes these human choices invisible.

This matters because algorithmic systems now affect major parts of your life. And most people have no idea how they actually work.

Where the Bias Hides

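When people say a decision is "data-driven," they make it sound automatic, like the computer just crunched numbers and spit out truth. But look closer at what actually happens: someone decides what data to collect, how the system is designed, and what goal it is optimized for.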

At every single stage, people are making judgment calls. The algorithm just executes those judgments at scale, making them look like facts.

Real-World Impact

Criminal Justice Algorithms

Black defendants nearly twice as likely to be mislabeled as high-risk

Credit Scoring Systems

91-point gap affects loan approvals, interest rates, and financial opportunities

Social Media Algorithms

Why This Happens

Take the criminal justice example. COMPAS, a risk-assessment tool used in U.S. courts, was trained on historical arrest data that reflects decades of racially biased policing. More arrests in Black neighborhoods don't necessarily mean more crime; they often mean more police presence. But the algorithm learned to treat arrest patterns as objective truth.
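To make the arrest-data point concrete, here is a minimal sketch. Every number in it is invented for illustration, and the names (`neighborhoods`, `detection_rate`, `expected_arrests`) are hypothetical. It assumes two neighborhoods with the same amount of underlying crime and different levels of police presence, and shows how arrest counts diverge anyway.

```python
# Hypothetical numbers, invented for illustration only (not real policing data).
# Both neighborhoods have the SAME number of underlying offenses; only the
# share of offenses that police detect differs.
neighborhoods = {
    "heavily_patrolled": {"offenses": 100, "detection_rate": 0.5},
    "lightly_patrolled": {"offenses": 100, "detection_rate": 0.25},
}


def expected_arrests(offenses, detection_rate):
    """Arrests reflect crime AND how much of it police are present to see."""
    return offenses * detection_rate


arrests = {
    name: expected_arrests(n["offenses"], n["detection_rate"])
    for name, n in neighborhoods.items()
}

# A model trained on arrests alone would see a 2x difference in "crime"
# between two places where the underlying crime is identical.
arrest_ratio = arrests["heavily_patrolled"] / arrests["lightly_patrolled"]
print(arrests, arrest_ratio)
```

This is a deliberately crude model, not a description of how any real risk tool works. The point is only that the training signal mixes two things together, and the algorithm has no way to separate them.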

Or consider credit scoring: minority borrowers often have thinner credit files, because of shorter credit histories or greater reliance on alternative financial services. With less data, the algorithm makes noisier predictions, but those predictions still determine who gets loans.
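The "less data, noisier predictions" idea can be sketched with a standard statistical fact: the uncertainty of an average shrinks with the square root of the number of observations. The example below assumes, as a simplification, that a score behaves like an average over independent observations. Real credit models are more complicated, and the figures and variable names here are hypothetical.

```python
import math


def standard_error(std_dev, n_observations):
    """Standard error of a mean estimated from n independent observations."""
    return std_dev / math.sqrt(n_observations)


# Hypothetical borrowers with the same underlying variability but very
# different amounts of recorded history.
thin_file_error = standard_error(std_dev=1.0, n_observations=4)
thick_file_error = standard_error(std_dev=1.0, n_observations=100)

# How many times noisier the thin-file estimate is than the thick-file one.
noise_ratio = thin_file_error / thick_file_error
print(thin_file_error, thick_file_error, noise_ratio)
```

Under these assumptions, the borrower with 4 data points gets an estimate about five times noisier than the borrower with 100, even though nothing about their actual reliability differs.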

Social media is different but equally problematic. Platforms optimize for engagement: clicks, shares, time spent. Research shows this consistently amplifies emotional, divisive, and low-quality content because that's what keeps people scrolling. The algorithm isn't trying to inform you; it's trying to keep you on the platform.
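Here is a toy sketch of what "optimizing for engagement" means in practice. The posts, scores, and field names are all made up for illustration, and no real platform's ranking system is this simple. What it shows is that a ranker sorting only by predicted engagement never looks at how informative a post is.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    predicted_engagement: float  # hypothetical model score, 0 to 1
    informativeness: float       # hypothetical rating, 0 to 1; the ranker ignores it


# Invented example posts, for illustration only.
posts = [
    Post("Careful explainer", predicted_engagement=0.2, informativeness=0.9),
    Post("Outrage bait", predicted_engagement=0.9, informativeness=0.1),
    Post("Local news update", predicted_engagement=0.4, informativeness=0.7),
]


def rank_feed(items):
    """Order posts by predicted engagement only, highest first."""
    return sorted(items, key=lambda p: p.predicted_engagement, reverse=True)


top_titles = [p.title for p in rank_feed(posts)]
print(top_titles)
```

In this toy feed, the least informative post lands at the top. Nobody wrote a rule saying "promote outrage"; it falls out of the choice of what to sort by.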

None of this is a bug. It's what happens when you treat "data-driven" as a synonym for "objective."

What You Can Do

The goal isn't to reject technology or go back to "gut feelings." It's to stop treating algorithms like they're infallible and start treating them like what they are: tools built by humans with specific goals and limitations.

Ask Questions

Next time you encounter an algorithmic decision, ask: What data was this trained on? What is it optimizing for? Who built it, and what assumptions did they make?

Demand Transparency

Support laws requiring companies and governments to disclose how their algorithmic systems work, especially for high-stakes decisions like loans, sentencing, and content moderation.

Build Data Literacy

Learn basic concepts: correlation vs. causation, what training data means, how models can be biased. You don't need to code; you just need to understand the fundamentals.

Stay Skeptical

When someone says a system is "data-driven" or "objective," treat it as a claim that needs evidence, not as proof of neutrality. Check the sources and question the metrics.

The Bottom Line

Algorithms are powerful tools. But they're not truth machines. They're shaped by human choices about data, design, and goals, and those choices often stay hidden behind technical complexity.

Next time someone tells you a decision is "data-driven," ask them: Whose data? Measuring what? Optimized for which goal?